Handling Time

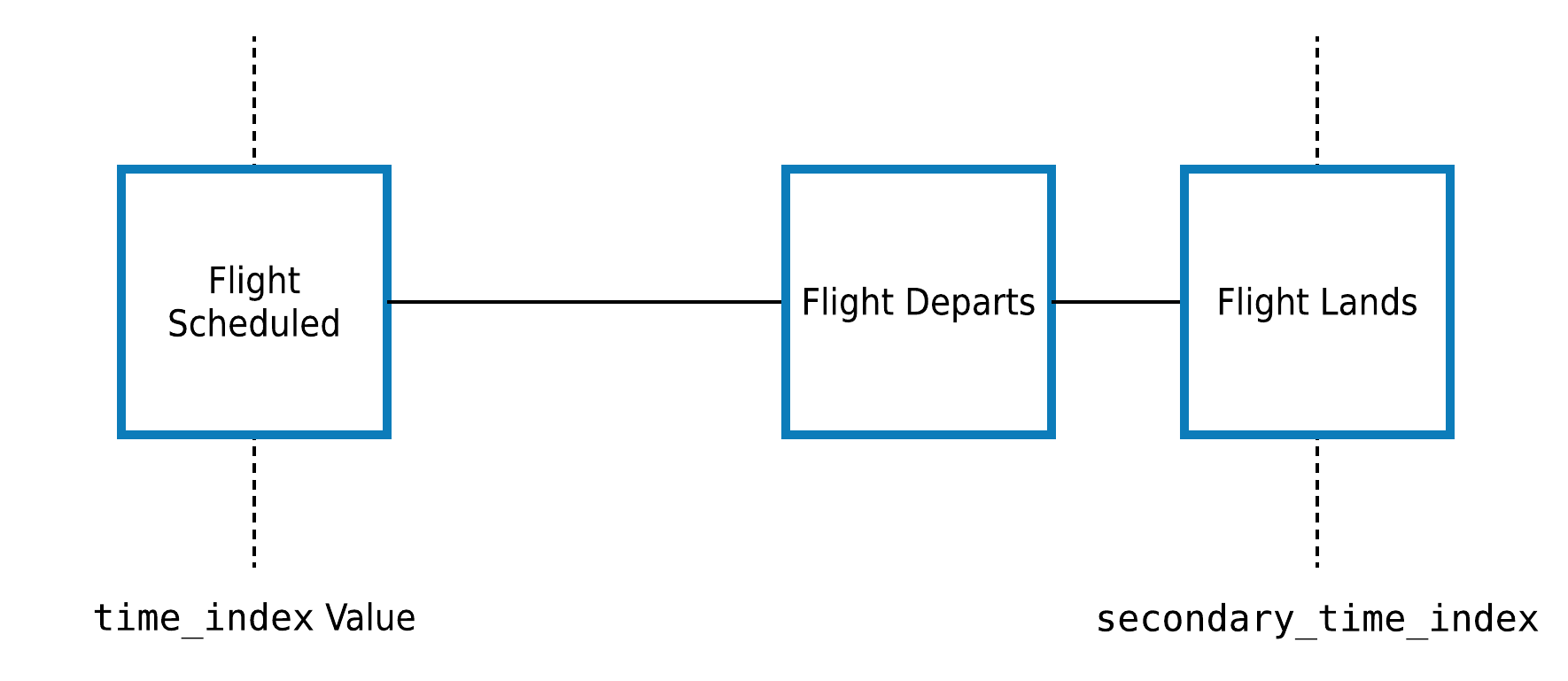

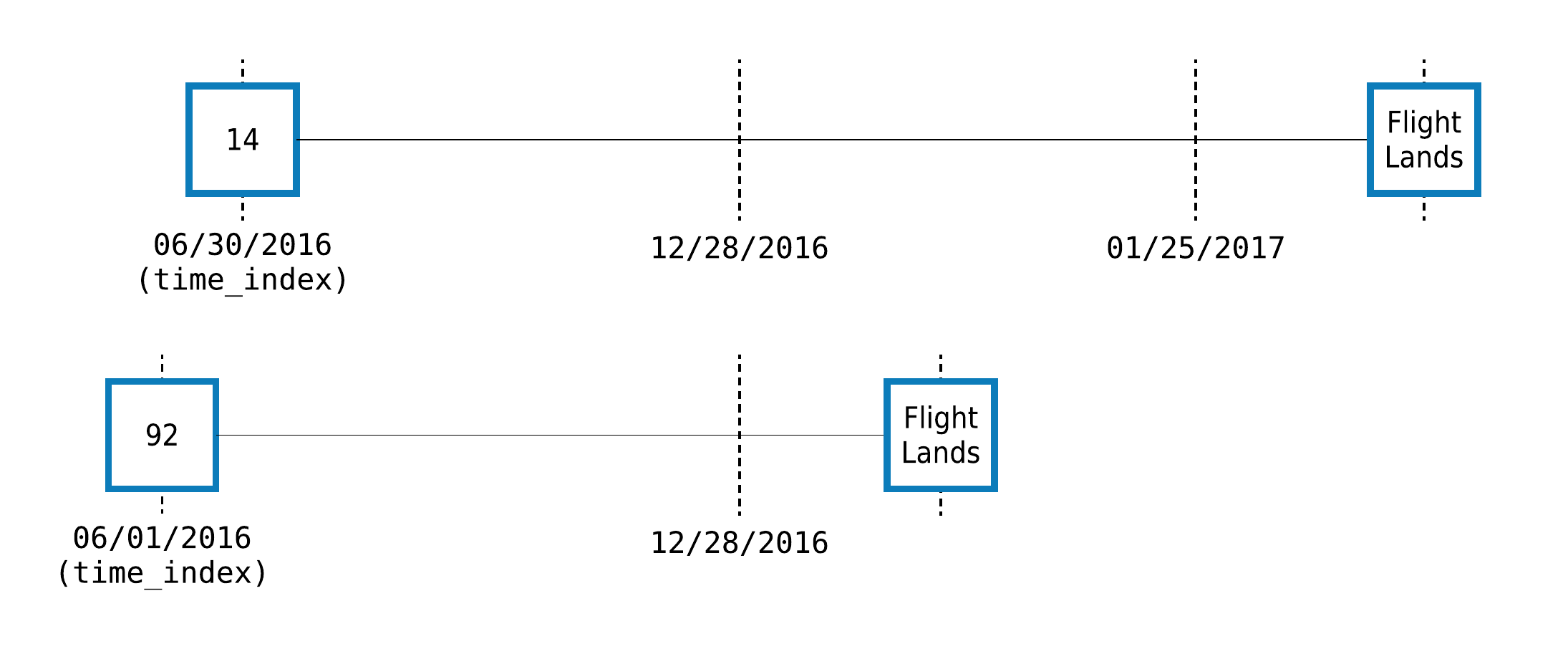

When performing feature engineering with temporal data, carefully selecting the data that is used for any calculation is paramount. By annotating dataframes with a Woodwork time index column and providing a cutoff time during feature calculation, Featuretools will automatically filter out any data after the cutoff time before running any calculations.

Note

This guide focuses on performing feature engineering on temporal data, but it is not specific to feature engineering for time series problems, which are their own class of machine learning problems. A guide on using Featuretools for time series feature engineering can be found here.

What is the Time Index?

The time index is the column in the data that specifies when the data in each row became known. For example, let’s examine a table of customer transactions:

[2]:

import pandas as pd

import featuretools as ft

es = ft.demo.load_mock_customer(return_entityset=True, random_seed=0)

es["transactions"].head()

[2]:

| | transaction_id | session_id | transaction_time | product_id | amount | _ft_last_time |
|---|---|---|---|---|---|---|
| 10 | 10 | 1 | 2014-01-01 00:00:00 | 5 | 127.64 | 2014-01-01 00:00:00 |
| 2 | 2 | 1 | 2014-01-01 00:01:05 | 2 | 109.48 | 2014-01-01 00:01:05 |
| 438 | 438 | 1 | 2014-01-01 00:02:10 | 3 | 95.06 | 2014-01-01 00:02:10 |
| 192 | 192 | 1 | 2014-01-01 00:03:15 | 4 | 78.92 | 2014-01-01 00:03:15 |
| 271 | 271 | 1 | 2014-01-01 00:04:20 | 3 | 31.54 | 2014-01-01 00:04:20 |

In this table, there is one row for every transaction and a transaction_time column that specifies when the transaction took place. This means that transaction_time is the time index because it indicates when the information in each row became known and available for feature calculations. For now, ignore the _ft_last_time column, a Featuretools-generated column that is discussed later on.

However, not every datetime column is a time index. Consider the customers dataframe:

[3]:

es["customers"]

[3]:

| | customer_id | zip_code | join_date | birthday | _ft_last_time |
|---|---|---|---|---|---|
| 5 | 5 | 60091 | 2010-07-17 05:27:50 | 1984-07-28 | 2014-01-01 08:09:40 |
| 4 | 4 | 60091 | 2011-04-08 20:08:14 | 2006-08-15 | 2014-01-01 05:31:30 |
| 1 | 1 | 60091 | 2011-04-17 10:48:33 | 1994-07-18 | 2014-01-01 07:26:20 |
| 3 | 3 | 13244 | 2011-08-13 15:42:34 | 2003-11-21 | 2014-01-01 09:00:35 |
| 2 | 2 | 13244 | 2012-04-15 23:31:04 | 1986-08-18 | 2014-01-01 08:23:45 |

Here, we have two time columns, join_date and birthday. While either column might be useful for making features, the join_date should be used as the time index because it indicates when that customer first became available in the dataset.

Important

The time index is defined as the first time that any information from a row can be used. If a cutoff time is specified when calculating features, rows that have a later value for the time index are automatically ignored.
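The filtering rule can be illustrated with plain pandas. This is a minimal sketch of the idea only, not Featuretools' internal implementation: the `visible_rows` helper is a hypothetical name introduced here, and the rows are copied from the transactions table above.

```python
import pandas as pd

# Rows copied from the transactions table shown earlier in this guide.
transactions = pd.DataFrame(
    {
        "transaction_id": [10, 2, 438, 192, 271],
        "transaction_time": pd.to_datetime(
            [
                "2014-01-01 00:00:00",
                "2014-01-01 00:01:05",
                "2014-01-01 00:02:10",
                "2014-01-01 00:03:15",
                "2014-01-01 00:04:20",
            ]
        ),
        "amount": [127.64, 109.48, 95.06, 78.92, 31.54],
    }
)


def visible_rows(df, time_index, cutoff_time):
    """Hypothetical helper: keep rows whose time index is at or before the cutoff."""
    return df[df[time_index] <= pd.Timestamp(cutoff_time)]


visible = visible_rows(transactions, "transaction_time", "2014-01-01 00:02:10")
print(visible["transaction_id"].tolist())  # [10, 2, 438]
```

Rows with a later time index (transactions 192 and 271 here) never reach the calculation, which is the behavior the time index and cutoff time are meant to guarantee.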

What is the Cutoff Time?

The cutoff_time specifies the last point in time that a row’s data can be used for a feature calculation. Any data after this point in time will be filtered out before calculating features.

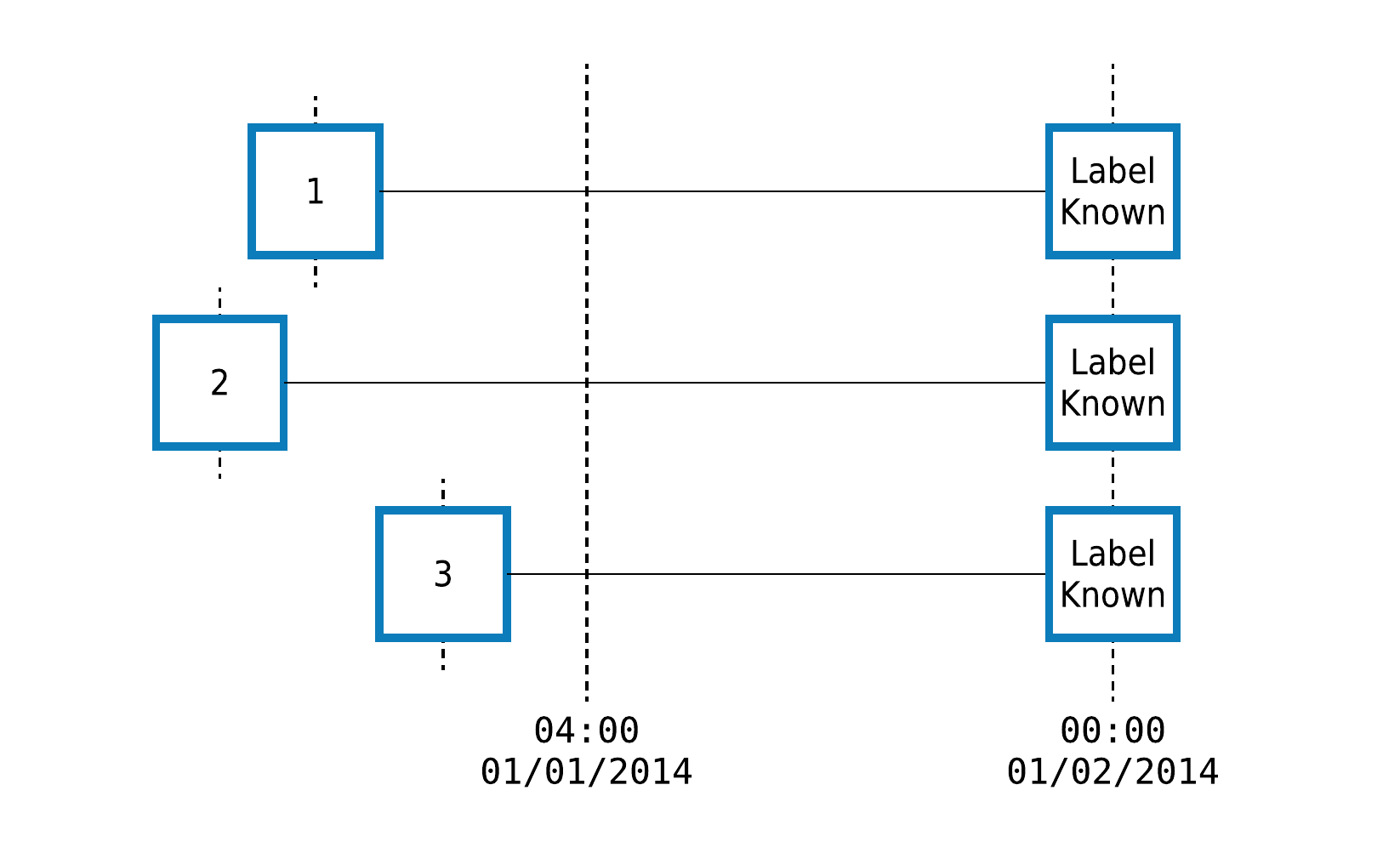

For example, let’s consider a dataset of timestamped customer transactions, where we want to predict whether customers 1, 2 and 3 will spend $500 between 04:00 on January 1 and the end of the day. When building features for this prediction problem, we need to ensure that no data after 04:00 is used in our calculations.
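To make the split between "data we may use" and "data we are predicting" concrete, here is a small pandas sketch. The transaction values are made up for illustration, and treating "spend $500" as a total of at least 500 in the label window is an assumption of this sketch.

```python
import pandas as pd

# Illustrative data (invented for this sketch), one row per transaction.
transactions = pd.DataFrame(
    {
        "customer_id": [1, 1, 2, 2, 3, 3],
        "transaction_time": pd.to_datetime(
            [
                "2014-01-01 01:00",
                "2014-01-01 06:00",
                "2014-01-01 03:30",
                "2014-01-01 09:15",
                "2014-01-01 04:00",
                "2014-01-01 23:00",
            ]
        ),
        "amount": [120.0, 510.0, 80.0, 200.0, 60.0, 450.0],
    }
)

cutoff = pd.Timestamp("2014-01-01 04:00")
end_of_day = pd.Timestamp("2014-01-02 00:00")

# Feature window: everything known at or before the cutoff time.
past = transactions[transactions["transaction_time"] <= cutoff]

# Label window: strictly after the cutoff, up to the end of the day.
future = transactions[
    (transactions["transaction_time"] > cutoff)
    & (transactions["transaction_time"] < end_of_day)
]

# A feature built only from the past, and a label built only from the future.
spend_so_far = past.groupby("customer_id")["amount"].sum()
label = future.groupby("customer_id")["amount"].sum() >= 500

print(spend_so_far.to_dict())  # {1: 120.0, 2: 80.0, 3: 60.0}
print(label.to_dict())  # {1: True, 2: False, 3: False}
```

Keeping the two windows disjoint is what prevents label leakage: no transaction contributes to both a feature and the label.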

We pass the cutoff time to featuretools.dfs() or featuretools.calculate_feature_matrix() using the cutoff_time argument like this:

[4]:

fm, features = ft.dfs(
    entityset=es,
    target_dataframe_name="customers",
    cutoff_time=pd.Timestamp("2014-1-1 04:00"),
    instance_ids=[1, 2, 3],
    cutoff_time_in_index=True,
)

fm

[4]:

| zip_code | COUNT(sessions) | MODE(sessions.device) | NUM_UNIQUE(sessions.device) | COUNT(transactions) | MAX(transactions.amount) | MEAN(transactions.amount) | MIN(transactions.amount) | MODE(transactions.product_id) | NUM_UNIQUE(transactions.product_id) | SKEW(transactions.amount) | STD(transactions.amount) | SUM(transactions.amount) | DAY(birthday) | DAY(join_date) | MONTH(birthday) | MONTH(join_date) | WEEKDAY(birthday) | WEEKDAY(join_date) | YEAR(birthday) | YEAR(join_date) | MAX(sessions.COUNT(transactions)) | MAX(sessions.MEAN(transactions.amount)) | MAX(sessions.MIN(transactions.amount)) | MAX(sessions.NUM_UNIQUE(transactions.product_id)) | MAX(sessions.SKEW(transactions.amount)) | MAX(sessions.STD(transactions.amount)) | MAX(sessions.SUM(transactions.amount)) | MEAN(sessions.COUNT(transactions)) | MEAN(sessions.MAX(transactions.amount)) | MEAN(sessions.MEAN(transactions.amount)) | MEAN(sessions.MIN(transactions.amount)) | MEAN(sessions.NUM_UNIQUE(transactions.product_id)) | MEAN(sessions.SKEW(transactions.amount)) | MEAN(sessions.STD(transactions.amount)) | MEAN(sessions.SUM(transactions.amount)) | MIN(sessions.COUNT(transactions)) | MIN(sessions.MAX(transactions.amount)) | MIN(sessions.MEAN(transactions.amount)) | MIN(sessions.NUM_UNIQUE(transactions.product_id)) | MIN(sessions.SKEW(transactions.amount)) | MIN(sessions.STD(transactions.amount)) | MIN(sessions.SUM(transactions.amount)) | MODE(sessions.DAY(session_start)) | MODE(sessions.MODE(transactions.product_id)) | MODE(sessions.MONTH(session_start)) | MODE(sessions.WEEKDAY(session_start)) | MODE(sessions.YEAR(session_start)) | NUM_UNIQUE(sessions.DAY(session_start)) | NUM_UNIQUE(sessions.MODE(transactions.product_id)) | NUM_UNIQUE(sessions.MONTH(session_start)) | NUM_UNIQUE(sessions.WEEKDAY(session_start)) | NUM_UNIQUE(sessions.YEAR(session_start)) | SKEW(sessions.COUNT(transactions)) | SKEW(sessions.MAX(transactions.amount)) | SKEW(sessions.MEAN(transactions.amount)) | 
SKEW(sessions.MIN(transactions.amount)) | SKEW(sessions.NUM_UNIQUE(transactions.product_id)) | SKEW(sessions.STD(transactions.amount)) | SKEW(sessions.SUM(transactions.amount)) | STD(sessions.COUNT(transactions)) | STD(sessions.MAX(transactions.amount)) | STD(sessions.MEAN(transactions.amount)) | STD(sessions.MIN(transactions.amount)) | STD(sessions.NUM_UNIQUE(transactions.product_id)) | STD(sessions.SKEW(transactions.amount)) | STD(sessions.SUM(transactions.amount)) | SUM(sessions.MAX(transactions.amount)) | SUM(sessions.MEAN(transactions.amount)) | SUM(sessions.MIN(transactions.amount)) | SUM(sessions.NUM_UNIQUE(transactions.product_id)) | SUM(sessions.SKEW(transactions.amount)) | SUM(sessions.STD(transactions.amount)) | MODE(transactions.sessions.device) | NUM_UNIQUE(transactions.sessions.device) | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| customer_id | time | |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

| 1 | 2014-01-01 04:00:00 | 60091 | 4 | tablet | 3 | 67 | 139.23 | 74.002836 | 5.81 | 4 | 5 | -0.006928 | 42.309717 | 4958.19 | 18 | 17 | 7 | 4 | 0 | 6 | 1994 | 2011 | 25.0 | 85.469167 | 8.74 | 5.0 | 0.234349 | 46.905665 | 1613.93 | 16.75 | 135.0100 | 76.150425 | 6.905 | 5.0 | -0.126261 | 42.393218 | 1239.5475 | 12.0 | 129.00 | 64.557200 | 5.0 | -0.830975 | 39.825249 | 1025.63 | 1 | 4 | 1 | 2 | 2014 | 1 | 3 | 1 | 1 | 1 | 1.614843 | -0.451371 | -0.233453 | 1.452325 | 0.0 | 1.235445 | 1.197406 | 5.678908 | 5.027226 | 10.426572 | 1.285833 | 0.0 | 0.500353 | 271.917637 | 540.04 | 304.601700 | 27.62 | 20.0 | -0.505043 | 169.572874 | tablet | 3 |

| 2 | 2014-01-01 04:00:00 | 13244 | 4 | desktop | 2 | 49 | 146.81 | 84.700000 | 12.07 | 4 | 5 | -0.134786 | 39.289512 | 4150.30 | 18 | 15 | 8 | 4 | 0 | 6 | 1986 | 2012 | 16.0 | 96.581000 | 56.46 | 5.0 | 0.295458 | 47.935920 | 1320.64 | 12.25 | 142.3225 | 85.197948 | 26.310 | 5.0 | 0.011293 | 39.315685 | 1037.5750 | 8.0 | 138.38 | 76.813125 | 5.0 | -0.455197 | 27.839228 | 634.84 | 1 | 2 | 1 | 2 | 2014 | 1 | 3 | 1 | 1 | 1 | -0.169238 | 0.459305 | 0.651941 | 1.815491 | 0.0 | -0.966834 | -0.823347 | 3.862210 | 3.470527 | 8.983533 | 20.424007 | 0.0 | 0.324809 | 307.743859 | 569.29 | 340.791792 | 105.24 | 20.0 | 0.045171 | 157.262738 | desktop | 2 |

| 3 | 2014-01-01 04:00:00 | 13244 | 1 | tablet | 1 | 15 | 146.31 | 62.791333 | 8.19 | 1 | 5 | 0.618455 | 47.264797 | 941.87 | 21 | 13 | 11 | 8 | 4 | 5 | 2003 | 2011 | 15.0 | 62.791333 | 8.19 | 5.0 | 0.618455 | 47.264797 | 941.87 | 15.00 | 146.3100 | 62.791333 | 8.190 | 5.0 | 0.618455 | 47.264797 | 941.8700 | 15.0 | 146.31 | 62.791333 | 5.0 | 0.618455 | 47.264797 | 941.87 | 1 | 1 | 1 | 2 | 2014 | 1 | 1 | 1 | 1 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | 146.31 | 62.791333 | 8.19 | 5.0 | 0.618455 | 47.264797 | tablet | 1 |

Even though the entityset contains the complete transaction history for each customer, only data with a time index up to and including the cutoff time was used to calculate the features above.

Using a Cutoff Time DataFrame

Oftentimes, the training examples for machine learning will come from different points in time. To specify a unique cutoff time for each row of the resulting feature matrix, we can pass a dataframe which includes one column for the instance id and another column for the corresponding cutoff time. These columns can be in any order, but they must be named properly. The column with the instance ids must either be named instance_id or have the same name as the target dataframe index. The column with the cutoff time values must either be named time or have the same name as the target dataframe time_index.

The column names for the instance ids and the cutoff time values should be unambiguous. Passing a dataframe that contains both a column with the same name as the target dataframe index and a column named instance_id will result in an error. Similarly, if the cutoff time dataframe contains both a column with the same name as the target dataframe time_index and a column named time, an error will be raised.
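The naming rules above can be expressed as a short check. The sketch below is a hypothetical helper written for this guide, not Featuretools' own validation code; the function names and the choice of `ValueError` are assumptions made for illustration.

```python
import pandas as pd


def _pick_column(columns, accepted_names, role):
    """Return the single column matching one of the accepted names."""
    # dict.fromkeys removes duplicates while preserving order.
    accepted = list(dict.fromkeys(accepted_names))
    found = [name for name in accepted if name in columns]
    if len(found) > 1:
        raise ValueError(f"Ambiguous {role} columns: {found}")
    if not found:
        raise ValueError(f"No {role} column found; expected one of {accepted}")
    return found[0]


def resolve_cutoff_columns(cutoff_df, target_index, target_time_index):
    """Hypothetical helper: find the instance id and cutoff time columns by name."""
    id_col = _pick_column(
        cutoff_df.columns, ["instance_id", target_index], "instance id"
    )
    time_col = _pick_column(
        cutoff_df.columns, ["time", target_time_index], "cutoff time"
    )
    return id_col, time_col


cutoff_times = pd.DataFrame(
    {
        "time": pd.to_datetime(["2014-1-1 04:00", "2014-1-1 05:00"]),
        "customer_id": [1, 2],
        "label": [True, False],
    }
)

# Column order does not matter; only the names do.
id_col, time_col = resolve_cutoff_columns(cutoff_times, "customer_id", "join_date")
print(id_col, time_col)  # customer_id time
```

A dataframe that carries both `instance_id` and `customer_id` (or both `time` and `join_date`) would make this helper raise, mirroring the ambiguity rule described above.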

Note

Only the columns corresponding to the instance ids and the cutoff times are used to calculate features. Any additional columns passed through are appended to the resulting feature matrix. This is typically used to pass through machine learning labels to ensure that they stay aligned with the feature matrix.

[5]:

cutoff_times = pd.DataFrame()
cutoff_times["customer_id"] = [1, 2, 3, 1]
cutoff_times["time"] = pd.to_datetime(
    ["2014-1-1 04:00", "2014-1-1 05:00", "2014-1-1 06:00", "2014-1-1 08:00"]
)
cutoff_times["label"] = [True, True, False, True]

cutoff_times

fm, features = ft.dfs(
    entityset=es,
    target_dataframe_name="customers",
    cutoff_time=cutoff_times,
    cutoff_time_in_index=True,
)

fm
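To see what a per-row cutoff means without running Featuretools, the following pandas sketch computes a single feature for each (customer, cutoff time) pair and carries the label column through. The transaction data is invented for illustration, `sum_amount_as_of` is a hypothetical helper, and the result only imitates the shape of a feature matrix with `cutoff_time_in_index=True`.

```python
import pandas as pd

# Invented transactions, already attributed to customers for simplicity.
transactions = pd.DataFrame(
    {
        "customer_id": [1, 1, 1, 2, 2, 3],
        "transaction_time": pd.to_datetime(
            [
                "2014-01-01 03:00",
                "2014-01-01 04:30",
                "2014-01-01 07:45",
                "2014-01-01 04:15",
                "2014-01-01 05:30",
                "2014-01-01 06:00",
            ]
        ),
        "amount": [10.0, 20.0, 30.0, 5.0, 15.0, 40.0],
    }
)

# Same cutoff times and labels as the example above.
cutoff_times = pd.DataFrame(
    {
        "customer_id": [1, 2, 3, 1],
        "time": pd.to_datetime(
            ["2014-1-1 04:00", "2014-1-1 05:00", "2014-1-1 06:00", "2014-1-1 08:00"]
        ),
        "label": [True, True, False, True],
    }
)


def sum_amount_as_of(row):
    """Hypothetical helper: total spend for one customer up to that row's cutoff."""
    mask = (transactions["customer_id"] == row["customer_id"]) & (
        transactions["transaction_time"] <= row["time"]
    )
    return transactions.loc[mask, "amount"].sum()


features = cutoff_times[["customer_id", "time"]].copy()
features["SUM(transactions.amount)"] = features.apply(sum_amount_as_of, axis=1)
# Extra columns such as labels are carried through and appended at the end.
features["label"] = cutoff_times["label"]
features = features.set_index(["customer_id", "time"])

print(features)
```

Customer 1 appears twice with different cutoff times, so the same customer yields two rows with different feature values (10.0 at 04:00 and 60.0 at 08:00), each aligned with its own label.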

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function mean at 0x7f99e010d0d0> is currently using SeriesGroupBy.mean. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "mean" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function std at 0x7f99e010d1f0> is currently using SeriesGroupBy.std. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "std" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function max at 0x7f99e0108790> is currently using SeriesGroupBy.max. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "max" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function min at 0x7f99e01088b0> is currently using SeriesGroupBy.min. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "min" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function sum at 0x7f99e0108160> is currently using SeriesGroupBy.sum. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "sum" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function max at 0x7f99e0108790> is currently using SeriesGroupBy.max. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "max" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function mean at 0x7f99e010d0d0> is currently using SeriesGroupBy.mean. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "mean" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function sum at 0x7f99e0108160> is currently using SeriesGroupBy.sum. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "sum" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function std at 0x7f99e010d1f0> is currently using SeriesGroupBy.std. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "std" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function min at 0x7f99e01088b0> is currently using SeriesGroupBy.min. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "min" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function min at 0x7f99e01088b0> is currently using SeriesGroupBy.min. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "min" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function sum at 0x7f99e0108160> is currently using SeriesGroupBy.sum. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "sum" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function mean at 0x7f99e010d0d0> is currently using SeriesGroupBy.mean. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "mean" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function max at 0x7f99e0108790> is currently using SeriesGroupBy.max. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "max" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function std at 0x7f99e010d1f0> is currently using SeriesGroupBy.std. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "std" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function mean at 0x7f99e010d0d0> is currently using SeriesGroupBy.mean. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "mean" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function min at 0x7f99e01088b0> is currently using SeriesGroupBy.min. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "min" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function sum at 0x7f99e0108160> is currently using SeriesGroupBy.sum. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "sum" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function max at 0x7f99e0108790> is currently using SeriesGroupBy.max. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "max" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function std at 0x7f99e010d1f0> is currently using SeriesGroupBy.std. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "std" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function mean at 0x7f99e010d0d0> is currently using SeriesGroupBy.mean. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "mean" instead.

to_merge = base_frame.groupby(

/home/docs/checkouts/readthedocs.org/user_builds/feature-labs-inc-featuretools/envs/stable/lib/python3.9/site-packages/featuretools/computational_backends/feature_set_calculator.py:781: FutureWarning: The provided callable <function min at 0x7f99e01088b0> is currently using SeriesGroupBy.min. In a future version of pandas, the provided callable will be used directly. To keep current behavior pass the string "min" instead.

to_merge = base_frame.groupby(

[5]:

| zip_code | COUNT(sessions) | MODE(sessions.device) | NUM_UNIQUE(sessions.device) | COUNT(transactions) | MAX(transactions.amount) | MEAN(transactions.amount) | MIN(transactions.amount) | MODE(transactions.product_id) | NUM_UNIQUE(transactions.product_id) | SKEW(transactions.amount) | STD(transactions.amount) | SUM(transactions.amount) | DAY(birthday) | DAY(join_date) | MONTH(birthday) | MONTH(join_date) | WEEKDAY(birthday) | WEEKDAY(join_date) | YEAR(birthday) | YEAR(join_date) | MAX(sessions.COUNT(transactions)) | MAX(sessions.MEAN(transactions.amount)) | MAX(sessions.MIN(transactions.amount)) | MAX(sessions.NUM_UNIQUE(transactions.product_id)) | MAX(sessions.SKEW(transactions.amount)) | MAX(sessions.STD(transactions.amount)) | MAX(sessions.SUM(transactions.amount)) | MEAN(sessions.COUNT(transactions)) | MEAN(sessions.MAX(transactions.amount)) | MEAN(sessions.MEAN(transactions.amount)) | MEAN(sessions.MIN(transactions.amount)) | MEAN(sessions.NUM_UNIQUE(transactions.product_id)) | MEAN(sessions.SKEW(transactions.amount)) | MEAN(sessions.STD(transactions.amount)) | MEAN(sessions.SUM(transactions.amount)) | MIN(sessions.COUNT(transactions)) | MIN(sessions.MAX(transactions.amount)) | MIN(sessions.MEAN(transactions.amount)) | MIN(sessions.NUM_UNIQUE(transactions.product_id)) | MIN(sessions.SKEW(transactions.amount)) | MIN(sessions.STD(transactions.amount)) | MIN(sessions.SUM(transactions.amount)) | MODE(sessions.DAY(session_start)) | MODE(sessions.MODE(transactions.product_id)) | MODE(sessions.MONTH(session_start)) | MODE(sessions.WEEKDAY(session_start)) | MODE(sessions.YEAR(session_start)) | NUM_UNIQUE(sessions.DAY(session_start)) | NUM_UNIQUE(sessions.MODE(transactions.product_id)) | NUM_UNIQUE(sessions.MONTH(session_start)) | NUM_UNIQUE(sessions.WEEKDAY(session_start)) | NUM_UNIQUE(sessions.YEAR(session_start)) | SKEW(sessions.COUNT(transactions)) | SKEW(sessions.MAX(transactions.amount)) | SKEW(sessions.MEAN(transactions.amount)) | 
SKEW(sessions.MIN(transactions.amount)) | SKEW(sessions.NUM_UNIQUE(transactions.product_id)) | SKEW(sessions.STD(transactions.amount)) | SKEW(sessions.SUM(transactions.amount)) | STD(sessions.COUNT(transactions)) | STD(sessions.MAX(transactions.amount)) | STD(sessions.MEAN(transactions.amount)) | STD(sessions.MIN(transactions.amount)) | STD(sessions.NUM_UNIQUE(transactions.product_id)) | STD(sessions.SKEW(transactions.amount)) | STD(sessions.SUM(transactions.amount)) | SUM(sessions.MAX(transactions.amount)) | SUM(sessions.MEAN(transactions.amount)) | SUM(sessions.MIN(transactions.amount)) | SUM(sessions.NUM_UNIQUE(transactions.product_id)) | SUM(sessions.SKEW(transactions.amount)) | SUM(sessions.STD(transactions.amount)) | MODE(transactions.sessions.device) | NUM_UNIQUE(transactions.sessions.device) | label | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| customer_id | time | ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

| 1 | 2014-01-01 04:00:00 | 60091 | 4 | tablet | 3 | 67 | 139.23 | 74.002836 | 5.81 | 4 | 5 | -0.006928 | 42.309717 | 4958.19 | 18 | 17 | 7 | 4 | 0 | 6 | 1994 | 2011 | 25.0 | 85.469167 | 8.74 | 5.0 | 0.234349 | 46.905665 | 1613.93 | 16.75 | 135.01000 | 76.150425 | 6.90500 | 5.0 | -0.126261 | 42.393218 | 1239.5475 | 12.0 | 129.00 | 64.557200 | 5.0 | -0.830975 | 39.825249 | 1025.63 | 1 | 4 | 1 | 2 | 2014 | 1 | 3 | 1 | 1 | 1 | 1.614843 | -0.451371 | -0.233453 | 1.452325 | 0.0 | 1.235445 | 1.197406 | 5.678908 | 5.027226 | 10.426572 | 1.285833 | 0.0 | 0.500353 | 271.917637 | 540.04 | 304.601700 | 27.62 | 20.0 | -0.505043 | 169.572874 | tablet | 3 | True |

| 2 | 2014-01-01 05:00:00 | 13244 | 5 | desktop | 2 | 62 | 146.81 | 83.149355 | 12.07 | 4 | 5 | -0.121811 | 38.047944 | 5155.26 | 18 | 15 | 8 | 4 | 0 | 6 | 1986 | 2012 | 16.0 | 96.581000 | 56.46 | 5.0 | 0.295458 | 47.935920 | 1320.64 | 12.40 | 137.62800 | 83.619281 | 25.41200 | 5.0 | -0.053949 | 38.197555 | 1031.0520 | 8.0 | 118.85 | 76.813125 | 5.0 | -0.455197 | 27.839228 | 634.84 | 1 | 2 | 1 | 2 | 2014 | 1 | 4 | 1 | 1 | 1 | -0.379092 | -1.814717 | 1.082192 | 1.959531 | 0.0 | -0.213518 | -0.667256 | 3.361547 | 10.919023 | 8.543351 | 17.801322 | 0.0 | 0.316873 | 266.912832 | 688.14 | 418.096407 | 127.06 | 25.0 | -0.269747 | 190.987775 | desktop | 2 | True |

| 3 | 2014-01-01 06:00:00 | 13244 | 4 | desktop | 2 | 44 | 146.31 | 65.174773 | 6.65 | 1 | 5 | 0.318315 | 40.349758 | 2867.69 | 21 | 13 | 11 | 8 | 4 | 5 | 2003 | 2011 | 17.0 | 91.760000 | 91.76 | 5.0 | 0.618455 | 47.264797 | 944.85 | 11.00 | 123.26750 | 72.742004 | 31.66500 | 4.0 | 0.286859 | 39.712232 | 716.9225 | 1.0 | 91.76 | 55.579412 | 1.0 | -0.289466 | 35.704680 | 91.76 | 1 | 1 | 1 | 2 | 2014 | 1 | 2 | 1 | 1 | 1 | -1.330938 | -1.060639 | 0.201588 | 1.874170 | -2.0 | 1.722323 | -1.977878 | 7.118052 | 22.808351 | 16.540737 | 40.508892 | 2.0 | 0.500999 | 417.557763 | 493.07 | 290.968018 | 126.66 | 16.0 | 0.860577 | 119.136697 | desktop | 2 | False |

| 1 | 2014-01-01 08:00:00 | 60091 | 8 | mobile | 3 | 126 | 139.43 | 71.631905 | 5.81 | 4 | 5 | 0.019698 | 40.442059 | 9025.62 | 18 | 17 | 7 | 4 | 0 | 6 | 1994 | 2011 | 25.0 | 88.755625 | 26.36 | 5.0 | 0.640252 | 46.905665 | 1613.93 | 15.75 | 132.24625 | 72.774140 | 9.82375 | 5.0 | -0.059515 | 39.093244 | 1128.2025 | 12.0 | 118.90 | 50.623125 | 5.0 | -1.038434 | 30.450261 | 809.97 | 1 | 4 | 1 | 2 | 2014 | 1 | 4 | 1 | 1 | 1 | 1.946018 | -0.780493 | -0.424949 | 2.440005 | 0.0 | -0.312355 | 0.778170 | 4.062019 | 7.322191 | 13.759314 | 6.954507 | 0.0 | 0.589386 | 279.510713 | 1057.97 | 582.193117 | 78.59 | 40.0 | -0.476122 | 312.745952 | mobile | 3 | True |

We can now see that every row of the feature matrix is calculated at the corresponding time in the cutoff time dataframe. Because each row is calculated at its own cutoff time, the same customer can appear more than once. In this case, we calculated the feature vector for customer 1 at both 04:00 and 08:00.
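To make the per-row behavior concrete, the following is a minimal pandas-only sketch of the idea, not Featuretools' actual implementation. The `transactions` and `toy_cutoffs` frames, the `transaction_time` column, and the `sum_amount_at_cutoff` helper are all hypothetical toy names invented for this illustration; they are not part of the mock customer dataset.

```python
import pandas as pd

# Hypothetical toy data (not the mock customer dataset used in this guide).
transactions = pd.DataFrame(
    {
        "customer_id": [1, 1, 1, 2, 2],
        "transaction_time": pd.to_datetime(
            [
                "2014-01-01 01:00",
                "2014-01-01 03:00",
                "2014-01-01 07:00",
                "2014-01-01 02:00",
                "2014-01-01 06:00",
            ]
        ),
        "amount": [10.0, 20.0, 30.0, 5.0, 15.0],
    }
)

# Customer 1 appears twice, with a different cutoff time on each row.
toy_cutoffs = pd.DataFrame(
    {
        "customer_id": [1, 2, 1],
        "time": pd.to_datetime(
            ["2014-01-01 04:00", "2014-01-01 05:00", "2014-01-01 08:00"]
        ),
    }
)


def sum_amount_at_cutoff(row):
    """Sum one customer's amounts using only rows at or before the cutoff."""
    visible = transactions[
        (transactions["customer_id"] == row["customer_id"])
        & (transactions["transaction_time"] <= row["time"])
    ]
    return visible["amount"].sum()


# Each row of the result is computed independently at its own cutoff time.
toy_cutoffs["SUM(amount)"] = toy_cutoffs.apply(sum_amount_at_cutoff, axis=1)
print(toy_cutoffs.set_index(["customer_id", "time"]))
```

In this toy example the two rows for customer 1 differ because the 07:00 transaction is only visible at the later cutoff.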

Training Window#

By default, all data up to and including the cutoff time is used. We can restrict the amount of historical data that is selected for calculations using a “training window.”
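As a rough mental model, a training window adds a lower bound on top of the cutoff-time filter. The sketch below uses plain pandas on hypothetical toy data (the `events` frame and its columns are invented for illustration) and is not how Featuretools implements the filter internally. It assumes the start of the window is exclusive; check the `ft.dfs` documentation for the exact boundary behavior in your version.

```python
import pandas as pd

# Hypothetical toy data (not the mock customer dataset used in this guide).
events = pd.DataFrame(
    {
        "event_time": pd.to_datetime(
            [
                "2014-01-01 01:00",
                "2014-01-01 02:30",
                "2014-01-01 03:30",
                "2014-01-01 05:00",
            ]
        ),
        "amount": [10.0, 20.0, 30.0, 40.0],
    }
)

cutoff = pd.Timestamp("2014-01-01 04:00")
window = pd.Timedelta(hours=2)

# Default behavior: everything up to and including the cutoff time.
up_to_cutoff = events[events["event_time"] <= cutoff]

# With a training window: additionally drop rows older than cutoff - window.
# Assumption: the window start is treated as exclusive in this sketch.
in_window = up_to_cutoff[up_to_cutoff["event_time"] > cutoff - window]

print(len(up_to_cutoff), up_to_cutoff["amount"].sum())
print(len(in_window), in_window["amount"].sum())
```

The 01:00 event is dropped by the window and the 05:00 event is dropped by the cutoff, so only the two events between 02:00 and 04:00 remain.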

Here’s an example of using a two-hour training window:

[6]:

window_fm, window_features = ft.dfs(
    entityset=es,
    target_dataframe_name="customers",
    cutoff_time=cutoff_times,
    cutoff_time_in_index=True,
    training_window="2 hour",
)

window_fm
